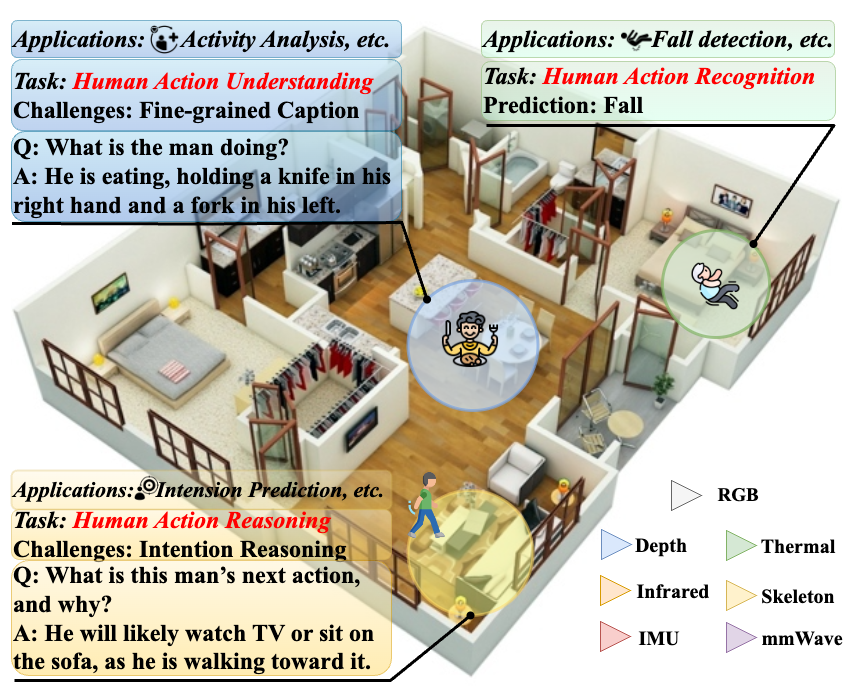

CUHK-X

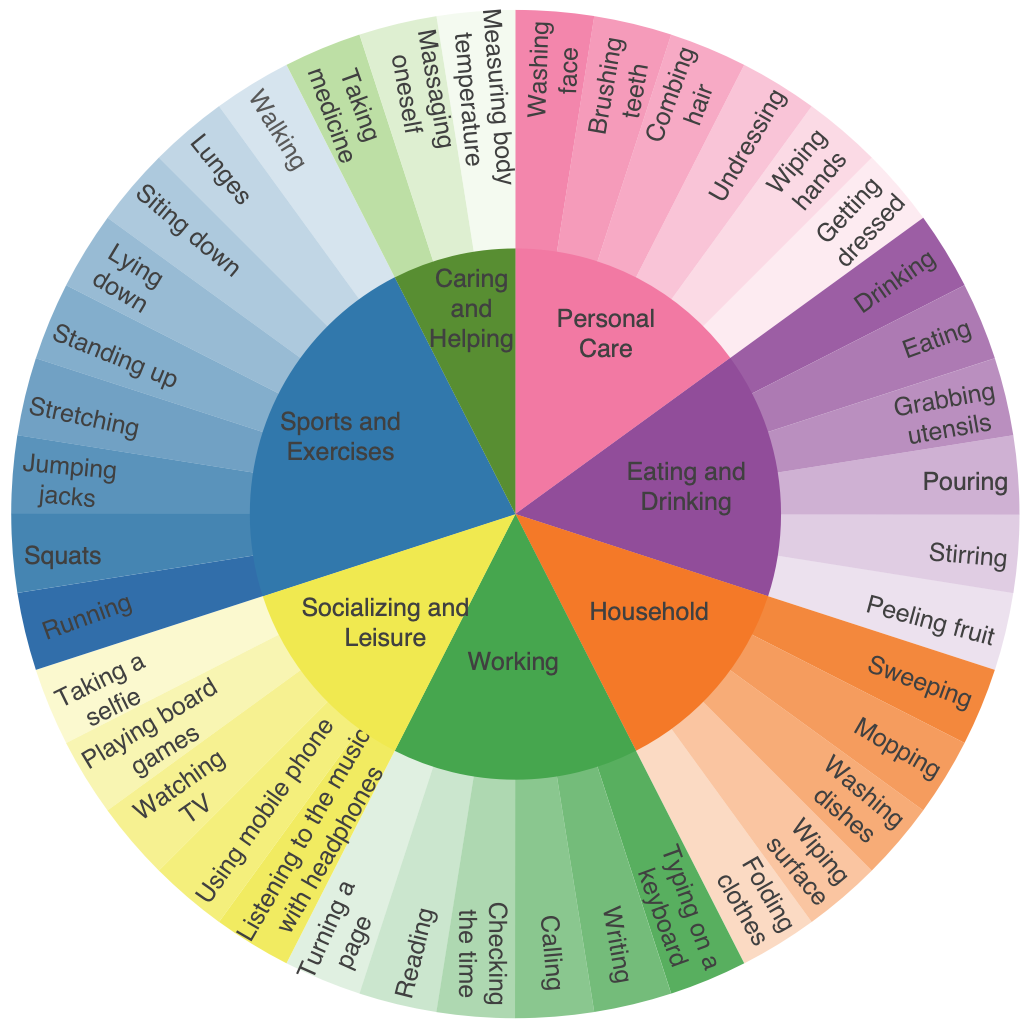

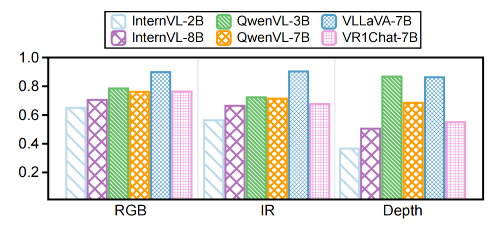

A Large-Scale Multimodal Dataset and Benchmark for Human Action Recognition, Understanding and Reasoning

CUHK

UIUC

Columbia University

PITT University

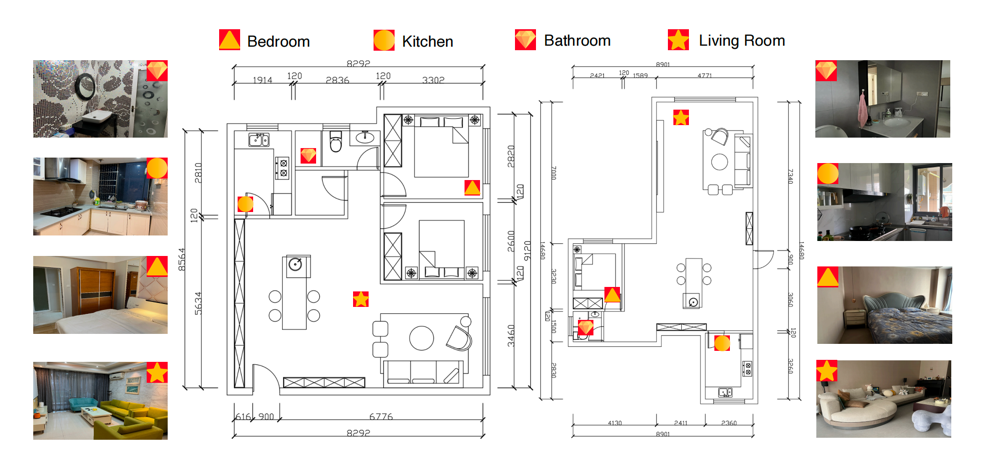

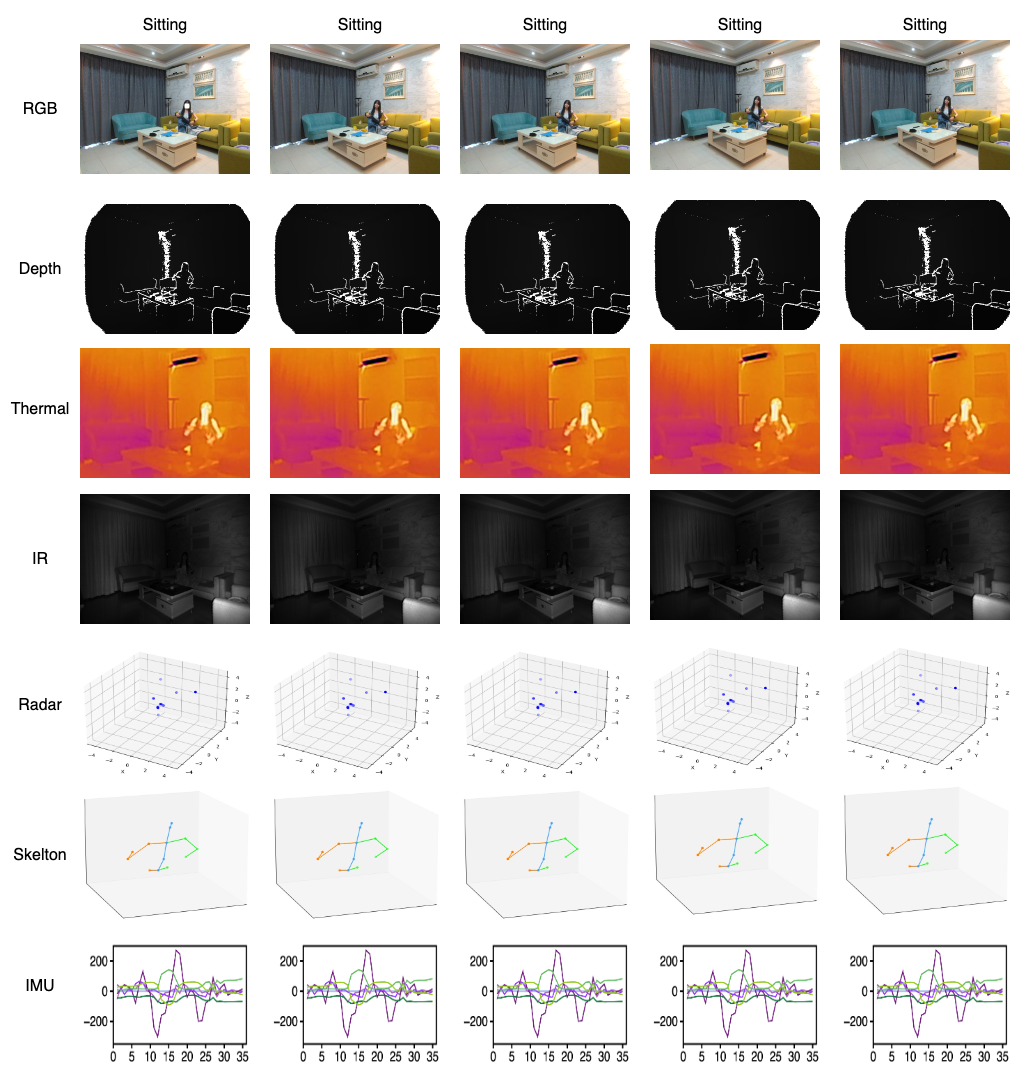

We have released CUHK-S, a sample subset of CUHK-X. The full CUHK-X dataset will be released soon, as we are currently preparing the CUHK-X competition. CUHK-S differs from the full dataset in two main ways: it includes only 18 users, and it excludes the RGB modality. We welcome the community to use CUHK-S and share feedback.